++++++++++

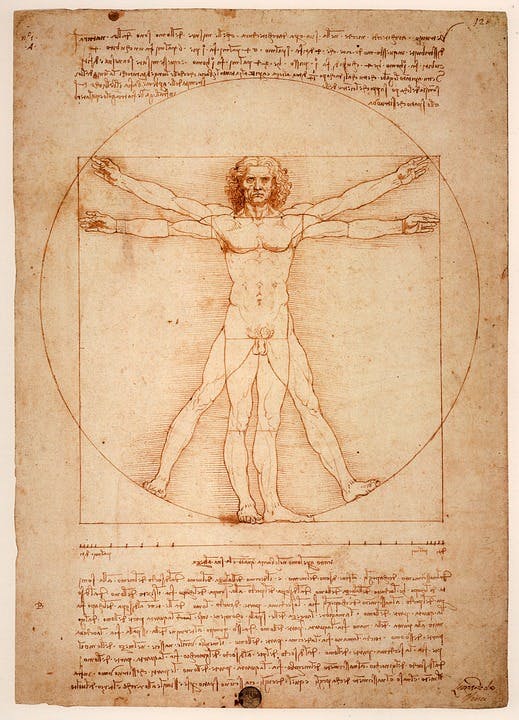

The “Mona Lisa” has her own room in the Louvre, where she attracts six million visitors each year. Her room is frequently crowded with frenzied guests attempting to catch a glimpse of her enigmatic smile. Over a year ago, Boston physician Dr. Mandeep R. Mehra was in that endless line, hoping to do the same. During the long wait, he pondered the details of La Gioconda’s strange looks: her yellowing skin, her thinning hair, and of course, her lopsided smile.

In that time, he came to a realization: This woman is ill.

“I had the chance to just stand there for an hour and a half staring at nothing but this painting,” Mehra, medical director of the Heart & Vascular Center at Brigham and Women’s Hospital, tells Inverse. “I’m not an artist. I don’t know how to appreciate art. But I do sure know how to make a clinical diagnosis.”

Over the next year, Mehra dug into the history of Lisa Gherardini, the woman in the legendary portrait, as well as the public health records of historical and modern-day Florence, where the painting was created. As he outlines in a new paper in the Mayo Clinic Proceedings journal, Gherardini suffered from an ailment that is still common — and quite treatable — today.


When you are stuck in a small room at the Louvre, looking very closely at the “Mona Lisa,” says Mehra, you start to notice a lot of strange details.

Take, for example, the inner corner of her left eye: There’s a small, fleshy bump there, just between her tear duct and the bridge of her nose. Her hair is oddly thin and lank, and her hairline is receding behind her veil. She has no eyebrows, whatsoever.

If you look closely at her eyes, you’ll notice they are oddly yellow — far more so than her skin.

The right side of her neck has a slight but pronounced bulge, and her face is slightly puffy.

And on her right hand, folded delicately over her left, there’s a noticeable bulge between her index and forefinger.

“It became clear to me that there was something wrong with her,” says Mehra.

“So, I’m basically looking at a receding hairline, loss of eyebrows, a swelling in the neck, coarse, thin hair,” he says. Then there’s the xanthelasma and the lipoma or xanthoma. “And I’m looking at a slightly edematous, swollen woman with no hair throughout. That, to me, is a classic picture of clinical hypothyroidism, or an underactive thyroid gland.”

Put that way, it looks plain as day. Poor Lisa.

This diagnosis, which is common in women who have recently given birth, as Gherardini had, even explains the perplexing smile of the “Mona Lisa.” While previous historians had tried to chalk it up to Bell’s palsy, a form of temporary facial paralysis that weakens the muscles in one half of the face, Mehra says this explanation doesn’t hold because there’s no unevenness in the rest of her face.

His hypothyroidism diagnosis, however, accounts for her inscrutable expression, and casts a certain sadness over the portrait.

“The more characteristic reason for why that smile is not a full-blown smile or is partially asymmetric is probably hypothyroidism,” he says, “because when you have hypothyroidism you’re a little depressed, and your facial muscles are puffy and weak. You can’t even bring yourself to a full smile.”

To back up his diagnosis, Mehra examined life in 16th-century Florence, looking for evidence that hypothyroidism might have been a common ailment.

Sure enough, he found that the food being eaten at the time — largely vegetables — was heavily deficient in iodine, which is necessary for maintaining thyroid health. Furthermore, many of the vegetables Renaissance Florentines ate, like cauliflower, cabbage, and kale, were goitrogens, or “goiter-promoting foods,” he says. Other studies on paintings by Renaissance masters back up his theory: About one-third of the paintings by Da Vinci’s contemporaries, like Caravaggio or Raphael, depict people with thyroid problems, says Mehra.

The problem Lisa Gherardini faced was not, it seems, isolated to the era in which she lived. Digging through public health records, Mehra discovered that thyroid deficiency remains an issue in some parts of modern Italy. “As recently as 1999 there are populations of people in iodine-deficient areas in Italy that had as much as 60 percent incidence of thyroid swelling,” he says.


The hypothyroidism diagnosis means that Gherardini’s life would have been uncomfortable, and increasingly so. “It’s slow. It happens over time,” says Mehra. People with hypothyroidism have trouble sleeping and difficulty regulating their body temperature; they become depressed and find it hard to think, and later become sedentary and unable to exercise. It can be life-threatening, but only in the very long term. Gherardini, for her part, is known to have lived to the ripe age of 63.

Da Vinci, a man of science, might have realized that his subject was ill, though it’s impossible to know. What is clear is that he deliberately depicted her with all her strange and compelling imperfections, which is perhaps the reason why the “Mona Lisa” has captivated onlookers for over five centuries.

“He was an incredibly accurate artist,” Mehra says. “I would call him a forensic artist. Almost a scientific artist. He didn’t just look at art and show what he thought would be eye-pleasing. He wanted to depict the form as it happens naturally, and that was his greatness.”

(For the full illustrated version of this, and many other great articles, please visit: https://www.inverse.com/article/48736-mona-lisa-smile-due-to-hypothyroidism/)

++++++++++

Adaptive optics lifts Earth’s atmospheric veil to reveal a sharper cosmos

++++++++++

Quantum radar to render stealth technologies ineffective

Stealth technology may not be very stealthy in the future thanks to a US$2.7-million project by the Canadian Department of National Defence to develop a new quantum radar system. The project, led by Jonathan Baugh at the University of Waterloo’s Institute for Quantum Computing (IQC), uses the phenomenon of quantum entanglement to eliminate heavy background noise, thereby defeating stealth anti-radar technologies to detect incoming aircraft and missiles with much greater accuracy.

Ever since the development of modern camouflage during the First World War, the military forces of major powers have been in a continual arms race between more advanced sensors and more effective stealth technologies. Using composite materials, novel geometries that limit microwave reflections, and special radar-absorbing paints, modern stealth aircraft have been able to reduce their radar profiles to that of a small bird – if they can be seen at all.

This stealthiness is compounded by modern radar jamming and deception technologies and by natural phenomena. In fact, one reason the Canadian Department of National Defence is pursuing the quantum radar project is that, in addition to Canada being at the frontier of any incoming strategic attacks directed against the West, it’s also in a region that is extremely hostile to conventional radar.

“In the Arctic, space weather such as geomagnetic storms and solar flares interfere with radar operation and make the effective identification of objects more challenging,” says Baugh. “By moving from traditional radar to quantum radar, we hope to not only cut through this noise, but also to identify objects that have been specifically designed to avoid detection.”

Conventional radar suffers from a problem common to all radio communication and detection: the signal-to-noise ratio. That is, if there is too much random noise mixed in with the signal you’re trying to detect, it doesn’t matter how much you turn up the volume. That only turns up the noise as well.
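The point about turning up the volume can be checked with a few lines of arithmetic. The sketch below is only an illustration: the power levels and the gain are made-up numbers, and the amplifier is assumed to be ideal (it adds no noise of its own). It shows that scaling signal and noise by the same gain leaves the ratio between them unchanged.

```python
import math

def snr_db(signal_power: float, noise_power: float) -> float:
    """Signal-to-noise ratio expressed in decibels."""
    return 10.0 * math.log10(signal_power / noise_power)

def amplify(signal_power: float, noise_power: float, gain: float):
    """An idealized amplifier: signal and noise power are scaled by the same gain."""
    return signal_power * gain, noise_power * gain

# Hypothetical values chosen for illustration only.
signal_power = 1e-12   # faint radar echo, in watts
noise_power = 1e-9     # background noise, in watts

before = snr_db(signal_power, noise_power)
boosted_signal, boosted_noise = amplify(signal_power, noise_power, gain=1000.0)
after = snr_db(boosted_signal, boosted_noise)

print(f"SNR before amplification: {before:.1f} dB")
print(f"SNR after amplification:  {after:.1f} dB")
```

Both lines report the same ratio, which is why a noisy return cannot be rescued by amplification alone.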

Quantum radar, on the other hand, gets around this using something called quantum illumination to filter out the noise by making the outgoing photons that make up the radar signal identifiable. It does this by means of the principle of quantum entanglement. This is when two photons are generated or made to interact in such a way that their properties are linked together. When this happens, if you can determine the position, momentum, spin, or polarization of one photon, you can ascertain the complementary position, momentum, spin, or polarization of its partner.

The upshot of this is that by shooting one photon out of the radar dish and retaining its pair, it’s possible to filter out unpaired photons from the returning beam. This way, background noise and electronic jamming are eliminated and the radar image becomes clear enough to detect even the most advanced stealth craft.
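A loose classical analogy may help show why keeping a reference copy is useful. The sketch below is not a simulation of entangled photons or of the Waterloo system. It simply assumes a transmitter that sends a random pattern of +1/-1 pulses, keeps a copy, and then correlates the noisy return against that copy. All names and numbers (echo strength, noise level, sequence length, seed) are hypothetical.

```python
import random

def make_reference(n: int, rng: random.Random) -> list:
    """Random +1/-1 pattern that the transmitter sends and also keeps a copy of."""
    return [rng.choice((-1.0, 1.0)) for _ in range(n)]

def received(reference: list, echo_strength: float,
             noise_sigma: float, rng: random.Random) -> list:
    """Return signal: a weak echo of the reference buried in Gaussian noise."""
    return [echo_strength * r + rng.gauss(0.0, noise_sigma) for r in reference]

def correlate(returned: list, reference: list) -> float:
    """Average product of return and reference; unrelated noise averages toward zero."""
    return sum(x * r for x, r in zip(returned, reference)) / len(reference)

rng = random.Random(42)                  # fixed seed so the run is repeatable
reference = make_reference(20000, rng)

# The noise is ten times stronger than the echo in both cases.
with_target = received(reference, echo_strength=0.2, noise_sigma=2.0, rng=rng)
without_target = received(reference, echo_strength=0.0, noise_sigma=2.0, rng=rng)

present_score = correlate(with_target, reference)
absent_score = correlate(without_target, reference)

print(f"correlation with a target present: {present_score:.3f}")
print(f"correlation with no target:        {absent_score:.3f}")
```

With a target present, the score settles near the echo strength; with no target, it stays near zero. Quantum illumination is described as exploiting correlations between paired photons rather than a stored classical pattern, so this should be read only as an intuition for matching a return against a retained reference.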

(For the balance of this article please see: https://newatlas.com/quantum-radar-detect-steath-aircraft/54356/)

++++++++++

Laser holograms create 3D-printed objects in seconds, no layering required

++++++++++


Cargo-carrying long-range VTOL UAV moves to full scale prototype stage

By Paul Ridden


The ATLIS VTOL autonomous drone is being designed for long range cargo hauling (Credit: Aergility).


Florida’s Aergility has spent the last few years developing and testing a new kind of vertical take-off and landing aircraft (VTOL) called the ATLIS. The wingless autonomous delivery drone is being designed to fly at 100 mph (161 km/h) for hundreds of miles on a single tank of gas, making use of a proprietary lift and control system called managed autorotation.

The ATLIS VTOL features an array of eight electric rotors to provide lift and control, and a gas-powered prop at the rear for forward momentum. While in the air, this “very unconventional gyrocopter” makes use of something Aergility is calling managed autorotation.

Company founder and CEO Jim Vander Mey told General Aviation News that the patent-pending system uses a flight controller to manage the revs of the rotors. Lift is achieved by powering up all of the rotors at the same time, while firing up select rotors and simultaneously slowing down others helps with turning. Regen braking is used to recoup energy expended during take-off and landing, meaning that “there is no net electrical energy consumed over the course of the flight.”

The UAV will be made from carbon fiber – for the housing, struts and rotor arms. Design renderings show the rotor arm assembly folding for transport and a payload that would be loaded onto a platform under the aircraft, which is then hoisted inside the fuselage. Initial development is focusing on long range cargo transport, but the ATLIS template can be scaled to meet the demands of such varied use scenarios as aerial survey, disaster relief and crop spraying.

(For the balance of this article please visit: https://newatlas.com/aergility-atlis-vtol-uav/55327/)

++++++++++

Milky Way still bears the 10-billion-year-old scars of a galactic collision

Galaxies collide with each other on a pretty regular basis. Our own Milky Way, for instance, has gobbled up dozens of smaller galaxies in the past, and Andromeda is currently hurtling towards us at 109 km (68 mi) per second. An international team of astronomers has now found evidence of a celestial smash-up between the Milky Way and an unknown dwarf galaxy that took place around eight to 10 billion years ago, and forever changed the face of our home galaxy.

According to the researchers, the evidence for this cosmic collision is all around us, from the bulge at the center of the Milky Way to the spread-out halo at the very fringes. The now-defunct dwarf galaxy has been dubbed the “Gaia Sausage,” after the ESA’s Gaia satellite used to plot out the trajectories of its stars, and the apparent shape those measurements revealed.

“We plotted the velocities of the stars, and the sausage shape just jumped out at us,” says Wyn Evans, co-author of the study. “As the smaller galaxy broke up, its stars were thrown out on very radial orbits. These Sausage stars are what’s left of the last major merger of the Milky Way.”

Minor mergers happen all the time, but the researchers say the Sausage galaxy would have been the largest to ever hit the Milky Way, containing the mass of about 10 billion Suns. According to simulations, the impact would have caused the Milky Way’s galactic disk to puff up or even fracture, sending Sausage stars piling into the center – which we now see as the bulge – and flicking others out into the spherical halo, on very stretched orbits.

(For the balance of this article please visit: https://newatlas.com/milky-way-sausage-galaxy-collision/55319/)

++++++++++

Beams of antimatter spotted blasting towards the ground in hurricanes

Although Hurricane Patricia was one of the most powerful storms ever recorded, that didn’t stop the National Oceanic and Atmospheric Administration (NOAA) from flying a scientific aircraft right through it. Now, the researchers have reported their findings, including the detection of a beam of antimatter being blasted towards the ground, accompanied by flashes of x-rays and gamma rays.

Scientists discovered terrestrial gamma-ray flashes (TGFs) in 1994, when orbiting instruments designed to detect deep space gamma ray bursts noticed signals coming from Earth. These were later linked to storms, and after thousands of subsequent observations have come to be seen as normal parts of lightning strikes.

The mechanisms behind these emissions are still shrouded in mystery, but the basic story goes that, first, the strong electric fields in thunderstorms cause electrons to accelerate to almost the speed of light. As these high-energy electrons scatter off other atoms in the air, they accelerate other electrons, quickly creating an avalanche of what are known as “relativistic” electrons.

All of these collisions also give off gamma rays, and when enough of them are happening at once, they can build to create an extremely bright TGF. But there’s another side effect: the creation of antimatter. When the gamma rays collide with the nuclei of atoms in the air, they create an electron and its antimatter equivalent, the positron, and send them screeching off in opposite directions.

Antimatter signatures have been spotted in storms in the past, but a particular phenomenon known as a reverse positron beam, where antimatter particles are sent downwards, had only been predicted by models of TGFs.

(For the balance of this article visit: https://newatlas.com/antimatter-beam-hurricane/54725/)

++++++++++

Asteroid belt may be remains of half a dozen ancient planets

They might seem like boring old rocks, but asteroids and meteorites have some fascinating stories to tell about the history of the solar system. New research from the University of Florida has now traced back the origins of almost all asteroids in the inner belt to just five or six ancient minor planets.

The solar system was far rougher in its youth than it is today. As the huge disc of dust and gas surrounding the Sun started clumping together, planets and moons were born and torn apart as they smashed into each other. The eight planets we know today are the survivors of this time, but other protoplanets were likely jostling for space before or during their reign.

Some of these lost worlds came to an explosive end when they collided with Earth, Mars and Uranus, but they live on in the form of moons of those planets. Meteorites, meanwhile, tell tales of ancient ocean planets and Mercury-sized planetoids that lived long enough for diamonds to form deep below their surface – and died explosively enough for the gems to be cast out into space.

These long-dead bodies seem ephemeral and hard to count, with current classifications involving hundreds of asteroid families. But the new study suggests that going far enough back, these families could be tied together, meaning all of the asteroids in the inner belt might have originated from just a few minor planets.

The University of Florida researchers examined 200,000 asteroids in the inner asteroid belt. For those rocks not assigned families, the team found a correlation between their size and orbit – specifically, the bigger the rock, the more eccentric (oval-shaped) the orbit. The opposite held true between their size and orbital inclination – basically, the smaller the rock, the further tilted its orbit is from the flat plane that most objects orbit along.

The researchers say that these connections suggest that up to 85 percent of the asteroids in the inner belt can be attributed to five known families: Flora, Vesta, Nysa, Polana and Eulalia. The remaining 15 percent could also fall into those same categories, or a few others that are currently unknown.

(For the balance of this article visit: https://newatlas.com/meteorites-asteroids-ancient-planets/55299/)

++++++++++

MIT discovery resurrects potential of molten salt batteries for grid level power storage

One of the primary problems with renewable energy, particularly wind and solar, is that power gets generated when the wind or sun is available, rather than when it’s most needed. This problem would more or less disappear if the world could come up with a massive, cheap, long-lasting battery design that could be used to store power at grid-scale levels and feed it back out when required.

Lithium batteries are the current darlings (heh heh) of the electric vehicle and consumer electronics industries, due to their high performance, power density and light weight. But lithium is way too expensive a material for grid-scale storage, and when you’re talking about making batteries for a whole city, size and weight are far less important than making something super cheap, safe and reliable that will last for as long as possible. All the better if it can be made out of common and easily available materials.

Good news, then, from MIT on this front, as a team of researchers has found a cheap, effective and durable way of resurrecting an old battery idea first documented 50 years ago.

The discovery centers around molten salt batteries such as sodium/sulfur or sodium/nickel chloride designs in which electrodes are kept at high temperatures to keep them in a molten state and allow charge to transfer between them.

(For complete article see: https://newatlas.com/mit-molten-salt-battery-membrane/53085/)

++++++++++

LANL’s new explosive could render toxic TNT obsolete

It looks as if the days of the venerable explosive trinitrotoluene (TNT) are numbered as researchers at Los Alamos National Laboratory (LANL) and the US Army Research Laboratory in Aberdeen, Maryland develop a new explosive that has the power of TNT, yet is safer and more environmentally friendly.

Invented by the German chemist Julius Wilbrand in 1863, TNT was originally created as a textile dye, but in 1891 Carl Häussermann discovered its explosive properties. Today, it’s one of the best known and most widely used explosives for both military and civilian applications. This is because TNT is not only a powerful explosive, it can also be melted and poured into molds.

But the key selling factor for TNT is that it’s very safe to handle. In fact, Britain’s 1875 Explosives Act didn’t even class TNT as an explosive in terms of storage and handling. This is because TNT is very hard to detonate, requiring a detonator and a pre-explosive charge called a “gain” to set it off with a strong enough shock wave. By itself, you can hit TNT with a hammer, saw it in half, or burn it in a campfire – though we definitely wouldn’t recommend such experiments.

Unfortunately, TNT is also highly toxic, with prolonged exposure affecting the blood, liver, and spleen. It’s also a possible human carcinogen and is a very dangerous soil and water pollutant. Not surprisingly, finding a safer, yet as effective, substitute that melts like TNT has its attractions.

(For complete article see: https://newatlas.com/tnt-substitute-explosive/55048/)

++++++++++

Cost-effective method of extracting uranium from seawater promises limitless nuclear power

The Pacific Northwest National Laboratory (PNNL) in association with LCW Supercritical Technologies has made an important breakthrough for the nuclear industry by extracting 5 grams of powdered uranium, called yellowcake, from ordinary seawater. The new process uses inexpensive, reusable acrylic fibers and could one day make nuclear energy effectively unlimited.

Along with salt, a liter of seawater also contains sulfates, magnesium, potassium, bromide, fluoride, gold, and uranium. There isn’t much of the latter – something like 3 micrograms per liter (0.00000045 ounces per gallon), but when you consider how big the ocean is, that works out to 500 times more uranium in the sea than could be mined on land – that’s 4 billion tons, or enough to run a thousand 1-gigawatt fission reactors for 100,000 years.
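The 4-billion-ton figure can be roughly reproduced from the concentration quoted above. The sketch below is a back-of-the-envelope check, not the laboratory's own calculation. The ocean volume of about 1.335 billion cubic kilometers is an assumption brought in from outside the article, and tons are treated as metric tonnes.

```python
# Back-of-the-envelope check of the seawater uranium figures.
OCEAN_VOLUME_KM3 = 1.335e9      # assumed approximate volume of the world's oceans
LITERS_PER_KM3 = 1e12           # 1 km^3 = 1e9 m^3, and 1 m^3 = 1,000 liters
URANIUM_G_PER_LITER = 3e-6      # 3 micrograms per liter, as quoted in the article
GRAMS_PER_TONNE = 1e6

total_liters = OCEAN_VOLUME_KM3 * LITERS_PER_KM3
uranium_tonnes = total_liters * URANIUM_G_PER_LITER / GRAMS_PER_TONNE

# The article's scenario: a thousand 1-gigawatt reactors running for 100,000 years.
reactor_years = 1000 * 100_000
tonnes_per_reactor_year = uranium_tonnes / reactor_years

print(f"uranium dissolved in the oceans: {uranium_tonnes:.2e} tonnes")
print(f"implied use per reactor-year:    {tonnes_per_reactor_year:.0f} tonnes")
```

Under these assumptions the total comes out at roughly 4 billion tonnes, which matches the article, and the reactor scenario implies a budget of about 40 tonnes of uranium per reactor per year.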

The tricky bit is how to get the uranium out of the water. One approach developed by the Japan Atomic Energy Institute used polymer mats that would draw the uranium atoms out of solution. But this was very expensive, and a cheaper process that involved doping polymers with amidoxime and then irradiating them was developed at Oak Ridge National Laboratory.

While this showed more promise, PNNL and Idaho-based LCW took it a step further by taking ordinary acrylic yarn and converting it into a uranium adsorbent. The exact details of the process haven’t been released, but PNNL says that the yellowcake sample shows that not only does the technique work, but that the acrylic can be cleaned and reused.

In addition, the technique can even use waste fibers for greater cost savings, and analysis shows that seawater extraction could be competitive with land mining at present prices.

(For balance of this article please visit: https://newatlas.com/nuclear-uranium-seawater-fibers/55033/)

++++++++++

Entanglement “on demand” sets the stage for quantum internet

Researchers at TU Delft have developed a new technique for generating quantum entanglement links on demand, opening the door for practical quantum networks (Credit: Cheyenne Hensgens)

With more sensitive data than ever being shared – and stolen – online, more secure connections are desperately needed. The answer could be a quantum internet, where information is passed almost instantaneously between nodes that have been quantum entangled and are therefore physically unhackable, since any unauthorized observation of the data will scramble it. Researchers at Delft University of Technology have now overcome a major hurdle on the road towards that goal by generating quantum links faster than they deteriorate.

Quantum entanglement is a strange phenomenon where two particles become so intertwined that by looking at the state of one you can accurately infer the state of the other, no matter how large a distance separates them. This communication is effectively instantaneous, which appears to violate fundamental laws of classical physics – namely, information can’t travel faster than the speed of light. Albert Einstein himself was famously unnerved by the implications, once describing it as “spooky action at a distance.”

(For more information on this visit: https://newatlas.com/on-demand-quantum-entanglement/55034/)

++++++++++

Massive Earth-buzzing asteroid found to have two moons

Recently, Earth was buzzed by asteroid 3122 Florence, the largest space rock NASA has seen come this close since it began tracking near-Earth objects (NEOs). Using a 70-m (230-ft) antenna at the Goldstone Deep Space Communications Complex, NASA took the opportunity to study Florence close up, confirming its monster size and revealing that it has two tiny moons.

By NASA’s count, there are more than 16,000 NEOs whipping around out there, but only 60 of them are known to have their own moons. And of those 60, Florence is just the third “triple asteroid,” meaning it has two moons orbiting it.

Radar images taken between August 29 and September 1 revealed that the moons probably measure between 100 and 300 m (330 and 985 ft) across. The outermost moon orbits Florence roughly once a day, while the inner object whips around its parent body every eight hours, making it the shortest orbital period of any asteroid’s moon known so far.

As for the asteroid itself, the radar images were able to pin down its size more accurately and reveal some of the topographic features on its face. Florence is slightly bigger than previous estimates: with a diameter of 4.5 km (2.8 mi), the rock would pose a serious threat to life on Earth were it on a collision course. It rotates on its axis once every 2.4 hours, and it’s a relatively round rock, with a ridge running around its equator and two large flat areas broken up by at least one large crater.

(For the balance of this article visit: https://newatlas.com/asteroid-florence-moons/51231/)

++++++++++

A tobacco-derived insect repellent – for crops

One of the problems with insecticides is the fact that they not only kill crop-eating insects, but also beneficial species such as bees and butterflies. Additionally, through storm runoff and soil leaching, they make their way into rivers and lakes, causing widespread environmental damage.

The tobacco plant, however, is able to protect itself from insects on its own. It does so by producing a chemical known as cembratrienol (or CBTol for short) in its leaves. Bugs are repelled by the odor of CBTol, and as a result tend to stay away.

Led by Prof. Thomas Brück, a team from the Technical University of Munich isolated the sections of the tobacco plant genome responsible for the formation of CBTol molecules, and then incorporated those into the genome of genetically-modified E. coli bacteria. When fed with wheat bran (obtained as a byproduct from grain mills), those bacteria subsequently produced CBTol.

(For the balance of this article please visit: https://newatlas.com/tobacco-insect-repellent-crops/54962/)

++++++++++

NASA Saw Something Come Out Of A Black Hole For The First Time Ever

You don’t have to know a whole lot about science to know that black holes normally suck things in, not spew things out. But NASA detected something mighty bizarre at the supermassive black hole Markarian 335. Two of NASA’s space telescopes, including the Nuclear Spectroscopic Telescope Array (NuSTAR), amazingly observed a black hole’s corona “launched” away from the supermassive black hole.

Then an enormous pulse of X-ray energy spewed out. This kind of phenomenon has never been observed before.

“This is the first time we have been able to link the launching of the corona to a flare. This will help us comprehend how supermassive black holes power some of the brightest objects in the cosmos,” Dan Wilkins, of Saint Mary’s University, said. This is one of the most important discoveries so far.

NuSTAR’s principal investigator, Fiona Harrison, noted that the nature of the energetic source was “enigmatic,” but added that the ability to actually record the event should provide some clues about the black hole’s size and structure, along with (hopefully) some fresh information on how black holes work. Fortunately for us, this black hole is 324 million light-years away.

So, no matter what bizarre things it is doing, it shouldn’t have any effect on our corner of the cosmos.

While we like to think we have a fairly good understanding of space, much of what we count as knowledge is just theory which has yet to be disproved. So it looks like some textbooks will need to be rewritten. And while this particular supermassive black hole is 324 million light-years away, I’m not taking any chances.

(For more articles like this visit: www.thespaceacademy.org)

++++++++++

Psychedelic Medicine 101: Psilocybin and the magic of mushrooms


Famous ethnobotanist Terence McKenna’s influential, and controversial, “stoned ape” hypothesis of human evolution posited that it was the addition of hallucinogenic mushrooms to our early ancestors’ diet as recently as 100,000 years ago that kickstarted our transformation from Homo erectus to Homo sapiens.

McKenna suggested the ingestion of magic mushrooms acted as an “evolutionary catalyst,” not only sparking the higher consciousness that led to language, art and religion, but also simply improving visual acuity, which delivered an evolutionary advantage to those mushroom-eating humans and helped them to become better hunters.

(To read the full article visit: https://newatlas.com/psychedelic-medicine-psilocybin-magic-mushrooms-medical-research/54808/)

++++++++++

Fans of human-powered transport can already choose between having both feet on a skateboard, or each foot in a rollerblade. If you’re more into motorized self-balancing devices, though, you’ve been pretty much stuck putting both feet on a Segway-like hoverboard. That’s not the case with Hovershoes.

++++++++++

A Giant Galaxy Orbiting Our Own Just Appeared Out of Nowhere

Astronomers scanning the skies just got a huge surprise. They discovered a gigantic galaxy orbiting our own, where none had been seen before. It just came out of nowhere. So, just how did the recently discovered Crater 2 manage to pull off this feat, like a deer jumping out from the interstellar bushes to suddenly shock us? Even though the appearance may seem sudden, Crater 2 has been there all along. We just never saw it.

Milky Way, Image Credit: ESO / Serge Brunier, Frederic Tapissier via NASA


Now that astronomers know it’s there, though, they have turned up a few other humbling facts. First of all, we can’t blame the galaxy’s size for its having gone unnoticed. Crater 2 is so large that researchers have already identified it as the fourth-largest galaxy orbiting our own. We can’t blame its distance, either. Crater 2’s orbit around the Milky Way puts it squarely in our neighborhood.

That being said, the question arises: how did we still not know it was there? A new research paper published in Monthly Notices of the Royal Astronomical Society by astronomers at the University of Cambridge has an answer for us. It turns out that, despite being huge and close, Crater 2 is also a pretty dark galaxy. In fact, it’s one of the faintest galaxies ever detected in the cosmos. That, along with some much brighter neighbors, let the galaxy that astronomers have nicknamed “the feeble giant” remain hidden from our eyes until now.

Now that we have observed Crater 2, however, the discovery raises some questions about what else could be out there that we are still missing. Astronomers are already talking about starting a hunt for similarly large, dark galaxies near us. It’s a good thing that there’s so much about the cosmos that we still don’t know.

(Article source: https://www.ispacea.com/2018/02/a-giant-galaxy-orbiting-our-own-just.html)

++++++++++

What animals is A.I. currently smarter than?

By Mike Colagrossi

The world is teeming with intelligence, from little wormy grubs in the garden to physicists poring over equations in university offices. In the past few years we’ve also come to view our virtual assistants as possessing some kind of intelligence—imperfect and sometimes downright creepy, but intelligence nonetheless. A.I. has come a long way since Microsoft’s Clippy.

Image credit: Shutterstock/Big Think

Whether we’re talking to Siri like a friend or asking our dogs for advice, humans love to imagine other animals’ intelligence. As we enter into the infancy of A.I., it’s fun to speculate how some existing lifeforms stack up to our best A.I. so far. Scientifically, it’s hard to get a read on how they compare, but there are some interesting comparisons to be made.

Intelligence and consciousness are still widely debated topics amongst scientists and philosophers alike. There is no exact consensus on what makes a human or animal, let alone an A.I. software program or robot, intelligent. One recent idea for determining general intelligence comes from Robert Sternberg, who put forth the Triarchic Theory of Intelligence. He argued that intelligence cannot be solely derived from IQ tests but instead can be broken down into analytic, creative, and practical components.

Today we view animal cognition as something worthy of increased study. It’s possible that many different kinds of animals have a much richer inner life than we ever imagined. Understanding animal intelligence can help us change and evolve our views on creating A.I. systems. As research scientist Heather Roff writes for The Conversation, “Instead of thinking about A.I. as something superhuman or alien, it’s easier to analogize them to animals, intelligent nonhumans we have experience training.” Roff continues: “The analogy works at a deeper level too. I’m not expecting the sitting dog to understand complex concepts like ‘love’ or ‘good.’ I’m expecting him to learn a behavior. Just as we can get dogs to sit, stay and roll over, we can get A.I. systems to move cars around public roads. But it’s too much to expect the car to ‘solve’ the ethical problems that can arise in driving emergencies.”

Will we ever have A.I. that understands feelings and ethics, and how far have we come on the road to creating intelligence?

(To continue reading this article visit: https://bigthink.com/mike-colagrossi/what-animals-is-ai-currently-smarter-than?)

++++++++++

NASA sheds light on strange object created in cosmic collision

In August 2017, astronomers were treated to one of the most spectacular stellar light shows ever seen – a collision between two neutron stars. The smashup was so powerful it sent gravitational ripples through the very fabric of spacetime, and produced flares in visible light, radio waves, x-rays and a gamma ray burst. Now that things have quietened down, astronomers have studied the strange object created in the cosmic collision.

The LIGO facility was the first to notice something big was happening. On August 17 last year, the instrument detected gravitational waves coming from a source now officially known as GW170817, which lies about 138 million light-years away. Gravitational waves alone are old news, but there was something different about this one – it wasn’t caused by invisible black holes merging, but the very-visible crash of two neutron stars.

About 70 observatories around the world quickly trained their sights on the location, and weren’t disappointed. Across the various instruments, signals were detected in visible light, radio waves, x-rays and a short gamma ray burst. The fireworks were expected to be short-lived, but to make things even weirder, the afterglow actually seemed to get brighter over the next few months.

The big question is, what kind of object was created in the collision? The two leading theories were that the neutron stars would merge to form either a black hole or a denser neutron star. Whatever it is, it has a mass of about 2.7 times that of the Sun, according to LIGO data.

That figure just raises further questions. If it’s a neutron star, it’s the most massive one ever detected, but if it’s a black hole, it has almost half the mass of the previous smallest known black hole.

To find out either way, the new study has analyzed data gathered by NASA’s Chandra X-ray Observatory in the days, weeks and months after the event. Chandra detected no x-ray signals coming from the object two or three days after the explosion, but observations made nine, 15 and 16 days afterwards all picked up signals. Unfortunately, soon after that the Sun passed between Earth and the object, halting attempts to track it.

When the sky was finally clear again, Chandra made more observations about 110 days after the collision, when it detected brighter signals, and 50 days after that the x-rays became more intense.

(For balance of this article go to: https://newatlas.com/neutron-star-collision-black-hole/54861/)

++++++++++

Smoothing the wrinkles in our cells could be a key to reversing aging

Science is often a piecemeal process, with each small, new discovery adding another insight into the mysterious mechanisms behind how our bodies work. A new finding from the University of Virginia School of Medicine adds another piece to the puzzle of how cells in our body degrade with age. The potentially revolutionary breakthrough reveals how our cells can wrinkle with age, resulting in genes not being expressed properly. And the solution could be a novel cellular anti-wrinkle cream, delivered by custom-built viruses.

The research initially found that inside an individual cell’s nucleus, the location of our DNA is fundamentally important for it to function correctly. Genes that sit up against the nuclear membrane, the wall that encases the nucleus, tend to remain switched off. However, as we age these membranes tend to become irregular in shape, or wrinkly, and genes that should remain switched off may become over-expressed.

(For more information on this visit: https://newatlas.com/reverse-aging-cell-wrinkles-virus-delivery/54820/)

++++++++++

Ocean Cleanup Project tests the waters with its first rollout in the Pacific

The team got to work at its new assembly plant in San Francisco in February, with the objective of building a 600-meter-long (2,000 ft) screen that would make use of the ocean’s natural currents to collect plastic waste.

The tow test currently underway is the first of three steps that the team will take in rolling out the full-scale barrier by the end of the year. It involved a 120-meter-long (400 ft) section being dragged around 50 nautical miles (93 km) offshore to see how it performs under tow and in the ocean.

(To read complete article visit: https://newatlas.com/ocean-cleanup-project-tow-test/54753/)

++++++++++

Does quantum tunneling take time or is it instantaneous?

Researchers at the Max Planck Institute have determined how long it takes electrons to quantum tunnel (Credit: fredmantel/Depositphotos)

In the weird world of quantum physics, it’s not unusual for particles to tunnel through barriers that under normal circumstances they shouldn’t be able to pass through. While this process, called quantum tunneling, is well documented, physicists haven’t been able to figure out if it happens instantaneously or takes a given amount of time. Now a team from the Max Planck Institute for Nuclear Physics has an answer.

The most common analogy used to explain this quirky quantum phenomenon is a ball rolling over a hill. Normally, the ball needs a certain amount of energy to push it up and over, otherwise it’s stuck at the bottom. It’s simple. But in quantum physics, there’s a chance that the ball could randomly move to the other side of the hill, through a process called quantum tunneling. This has been well documented for decades: elementary particles escaping from atoms is one of the key drivers behind radioactive decay.

The part of the process still up for debate is the timescale involved for the particle to tunnel to freedom. There are two theories: the “simple man” model says that it happens instantly, so the escaping electron will just appear at the exit of the tunnel with no velocity. But in 1955, physicist Eugene Wigner proposed the idea that it takes a finite (albeit short) amount of time for the particle to make the journey.

To investigate, the Max Planck team induced quantum tunneling of electrons in atoms, and then measured the time (if any) it took them to do so. Since it would be happening over an incredibly tiny timescale, the scientists developed a clever little trick that would allow them to see which scenario was happening.

To induce quantum tunneling, the scientists blasted a gas mixture of krypton and argon atoms with short laser pulses. This temporarily weakens the electric field that binds the electrons in place, increasing the probability that one of them will tunnel out. The trajectory of the electron’s exit from the nucleus is guided by the laser’s electric field, and in this particular experiment, the laser beam is rotating, which causes the “energy pot” containing the electrons to rotate too.

(To read full article visit: https://newatlas.com/time-electron-quantum-tunneling/50784/)

++++++++++

Broken nanodiamonds create a super-long-lasting, very-low-friction dry lubricant

“Broken nanodiamonds are forever,” or so says a team of scientists at the US Department of Energy’s (DOE) Argonne National Laboratory. By combining broken nanodiamonds with two-dimensional molybdenum disulfide layers, they’ve managed to produce a self-generating, very-low-friction dry lubricant with hundreds of applications that lasts practically forever.

Dry lubricants are an important tool for modern engineers, with a number of advantages over their liquid counterparts. Unlike greases and oils, dry lubricants aren’t as chemically active, don’t leak or squeeze out, and don’t capture dust or grit. In addition, they don’t break down at high temperatures and some work in the vacuum of space where liquids would evaporate or freeze solid.

One of the most common solid lubricants is graphite powder or paste, which is made up of plate-like carbon molecules with water molecules between them that act like extremely tiny ball bearings. It’s used for lubricating locks, door knobs, and bicycle chains, as well as high temperature or high pressure environments. However, there are more exotic dry lubricants.

About three years ago, a team led by Anirudha Sumant of the Nanoscience and Technology division of Argonne found that by mixing graphene with nanodiamonds, it was possible for the first time on an engineering scale to produce superlubricity or near-zero friction. Now Sumant’s team has taken this a step further by replacing the graphene with molybdenum disulfide – another common dry lubricant that’s widely used in space industries because it performs well in a vacuum.

(See full article at: https://newatlas.com/nanodiamond-dry-lubricant/54589/)

++++++++++

We’re smart enough to create intelligent machines. But are we wise enough?

Some of the most intelligent people at the most highly-funded companies in the world can’t seem to answer this simple question: what is the danger in creating something smarter than you? They’ve created AI so smart that the “deep learning” is outsmarting the people that made it. The reason is the “blackbox” style code that the AI is based on: it’s built solely to become smarter, and we have no way to regulate that knowledge. That might not seem like a terrible thing if you want to build superintelligence. But we’ve all experienced something minor going wrong, or a bug, in our current electronics. Imagine that, but in a Robojudge that can sentence you to 10 years in prison without explanation other than “I’ve been fed data and this is what I compute”… or a bug in the AI of a busy airport. We need regulation now before we create something we can’t control. Max Tegmark’s book Life 3.0: Being Human in the Age of Artificial Intelligence is being heralded as one of the best books on AI, period, and is a must-read if you’re interested in the subject.

(Article Source: https://bigthink.com/videos/max-tegmark-were-smart-enough-to-create-intelligent-machines-but-are-we-wise-enough)

++++++++++

Earth’s magnetic field is weakening – but it’s not about to reverse

Researchers have looked to the past to determine whether the weakening magnetic field is indicative of an imminent pole reversal (Credit: Peter Reid, The University of Edinburgh)

We owe our existence to the Earth’s magnetic field, the invisible barrier that protects the planet from the harsh radiation of space. But this shield is far from static, and tends to wane and even reverse at semi-regular intervals. With the magnetic field currently weakening, there’s been a lot of talk in recent years that another flip might be imminent, but a new study has looked at the history of these events and found that a reversal probably isn’t about to happen.

The cause for concern starts with what’s known as the South Atlantic Anomaly (SAA). In this area, stretching across the Atlantic Ocean from Chile to Zimbabwe, the magnetic field is substantially weaker than elsewhere in the world. Ever since this region was discovered in 1958, it’s been growing, as part of an overall weakening of the entire magnetic field over the last few centuries.

The end result of that trend appears to be the reversal of the poles. Historically, magnetic north and south switch places every 200,000 to 300,000 years, and we’re actually well overdue for such an event – it’s been about 780,000 years since the last one. Although doomsayers love to shout about how a pole reversal would rain down hellish amounts of radiation onto Earth, NASA says that our biggest concern would just be buying new compasses.

But how likely is that scenario, anyway? To find out, researchers from the University of Liverpool, GFZ German Research Center for Geosciences and the University of Iceland looked to past fluctuations in the field. A weakening magnetic field doesn’t always mean the poles are about to reverse – more often than not the field recovers its original structure, and this waning-recovering event is known as a geomagnetic excursion.

The researchers modeled the geomagnetic field between 30,000 and 50,000 years ago. Their aim was to examine the two most recent geomagnetic excursions – the Laschamp, which occurred around 41,000 years ago, and Mono Lake, which occurred around 34,000 years ago. The team found that the magnetic field at those times looked nothing like it does today, indicating that the current changes aren’t warning signs of any impending excursion or reversal.

“There has been speculation that we are about to experience a magnetic polar reversal or excursion,” says Richard Holme, co-author of the study. “However, by studying the two most recent excursion events, we show that neither bear resemblance to current changes in the geomagnetic field and therefore it is probably unlikely that such an event is about to happen. Our research suggests instead that the current weakened field will recover without such an extreme event, and therefore is unlikely to reverse.”

Although doomsayers love to shout about how a pole reversal would rain down hellish amounts of radiation onto Earth, NASA says that our biggest concern would just be buying new compasses (Credit: vjanez/Depositphotos)

To back it up, the team also found two periods where the field’s structure was most similar to how it is today: 49,000 and 46,000 years ago. The field at these times had “anomalies” similar to – but much stronger than – that over the South Atlantic today, and yet neither developed into anything. Studies of chlorine and beryllium isotopes indicate that more cosmic radiation was indeed reaching the surface 46,000 years ago.

The results of this, as well as other similar studies, should help allay any fears of an impending pole reversal. Not only is it not likely to happen any time soon, but even if it did we don’t have anything to worry about.

The research was published in the Proceedings of the National Academy of Sciences.

Source: University of Liverpool

(Article source: https://newatlas.com/magnetic-field-reversal-not-imminent/54426/)

++++++++++

How a “virus cocktail” can help wash away food poisoning

Michael Irving

Food poisoning is far from a fun experience, and usually all you can do is just ride out the storm. But soon you might be able to chase a bad burger with a “virus cocktail” loaded with bacteria-hunting viruses (bacteriophages) that will kill off the invading E. coli without harming the helpful bugs that call your gut home.

Of course, not everybody wants to weather the violent evacuations, and in the event of downing some suspect sushi, doctors can prescribe antibiotics to clear out the bad bugs. Unfortunately, the drugs don’t discriminate and will go off like a nuke in your gut, which results in a lot of innocent casualties in your gut microbiome – the complex ecosystem of bacteria that plays a surprisingly large role in your overall health. There’s also the growing concern of antibiotic resistance to contend with.

So, researchers at the University of Copenhagen set out to design a more targeted approach. The idea was to use bacteriophages that would hunt down and kill specific species of bacteria – in this case, E. coli – like a sniper shot, instead of a scattergun blast. The team settled on three species of lytic phages that, together, were able to wipe out hundreds of different E. coli strains.

“The research shows that we have an opportunity to kill specific bacteria without collateral damage to the other, and otherwise healthy, intestinal flora,” says Dennis Sandris Nielsen, an author of the study.

These phages were singled out through rigorous tests in a model of the small intestine, which the team calls the TSI. To make the model as realistic as possible, it contained all the fluids and enzymes that would normally be present in the human gut, as well as a batch of intestinal flora matching that of a healthy person. Then, the researchers added E. coli, before sending in their virus cocktail to clean up the mess.

“The novelty of the TSI model is that it simulates the presence of the small intestinal microbiota, which has largely been overlooked in other models of the small intestine,” says Tomasz Cieplak, an author of the study. “Other models existing on the market simulate only the purely biophysical processes, such as bile salts and digestive enzymes or pH, but here we included this important aspect of human gut physiology to mimic the small intestine more closely.”

The results showed that the three lytic phage species were the most effective at killing the bad bugs while leaving the good ones alone. To continue working on the promising treatment, the researchers plan to test the cocktail on mice and eventually humans.

The research was published in the journal Gut Microbes.

Source: University of Copenhagen

(Article source: https://newatlas.com/virus-cocktail-fight-food-poisoning/)

++++++++++

Astronomers Admit: We Were Wrong—100 Billion Habitable Earth-Like Planets In Our Galaxy Alone

Estimates by astronomers indicate that there could be more than 100 BILLION Earth-like worlds in the Milky Way that could be home to life. Think that’s a big number? According to astronomers, there are roughly 500 billion galaxies in the known universe, which means there are around 50,000,000,000,000,000,000,000 (5×10^22) habitable planets. That’s of course if there’s just ONE universe.
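
The arithmetic behind that figure can be checked in a few lines. The sketch below is purely illustrative: it takes the article’s two estimates at face value and assumes every galaxy hosts as many habitable planets as the Milky Way, which is the simplification the headline number relies on. The variable names are our own.

```python
# Rough check of the article's estimate. Both inputs are the article's figures;
# the variable names are hypothetical and chosen only for this illustration.
habitable_per_galaxy = 100 * 10**9   # ~100 billion Earth-like planets per galaxy
galaxies = 500 * 10**9               # ~500 billion galaxies in the known universe

# Assumes every galaxy matches the Milky Way's count, a large simplification.
total_habitable = habitable_per_galaxy * galaxies

print(f"{total_habitable:,}")    # digits grouped in thousands
print(f"{total_habitable:.0e}")  # scientific notation
```

The product works out to 5×10^22, matching the long-form number quoted above.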

In fact, experts now believe there are some 400 BILLION STARS inside our own Milky Way Galaxy, and even this number may be too small, as some astrophysicists believe the stars in our galaxy could number as many as a TRILLION. This means that the Milky Way alone could be home to more than 100 BILLION planets.

However, since astronomers aren’t able to see our galaxy from the outside, they can’t really know for sure the number of planets the Milky Way is home to. They can only provide estimates.

To do this, experts calculate our galaxy’s mass and calculate how much of that mass is composed of stars. Based on these calculations scientists believe our galaxy is home to at least 400 billion stars, but as I mentioned above, this number could drastically rise.

There are some calculations which suggest that the Milky Way is home, on average, to between 800 billion and 3.2 trillion planets, but some experts believe the number could be as high as eight trillion.

Furthermore, if we take a look at what NASA has to say, we’ll find that the space agency believes there are at least 1,500 planets located within 50 light years of Earth. These conclusions are based on observations taken over a period of six years by the PLANET (Probing Lensing Anomalies NETwork) collaboration, founded in 1995. The study concluded that there are far more Earth-sized planets than Jupiter-sized worlds.

(For the full article visit: https://www.thespaceacademy.org/2018/03/astronomers-admit-we-were-wrong100.html)

++++++++++

‘Fog harp’ makes water out of thin air

“Fog Harp”

++++++++++

Written on Mar 19, 2018 by Gerard West

You think Earth has it bad? When it comes to extreme weather, Earth is tame compared to some of the other planets in our solar system.

Venus Has Sulphuric Acid Rain

Venus has a thick atmosphere made mostly of carbon dioxide. The thick atmosphere captures more of the sun’s radiation than Earth’s atmosphere does. In combination with the carbon dioxide, this makes the rain consist of sulphuric acid instead of water!

There are no known lifeforms on Venus, but if there were, they could count themselves lucky that the acid rain evaporates before it hits the ground. This is because the surface temperatures on Venus are so high.

Extreme Cold of Uranus

Uranus is the coldest planet in our solar system. Its temperatures can reach an extreme low of -371.2 °F. However, Uranus also has a sparkling phenomenon: raindrops made of diamonds!

Extreme Blizzards on Mars

Have you ever used dry ice to create spooky fog on Halloween? Or to keep something perishable cold while shipping? If so, you know how frigid dry ice can be.

Mars has snow made of frozen carbon dioxide rather than water. So, instead of typical Earth snow, Mars’ snow is made up of dry ice!

Mercury’s Unbearable Temperature Changes

Unlike many of the planets in our solar system, Mercury has practically no atmosphere. Since it is so close to the sun, the temperatures can soar to a high of 800.6 °F during the day, and then plunge to -277.6 °F at night. This is because there is not a thick enough atmosphere to trap the warmth at night.
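
For readers who think in Celsius, the Fahrenheit figures quoted in this article can be converted with the standard formula. The snippet below is just a convenience sketch; the function name and the labels are our own, and the input values are the ones quoted in the text.

```python
def fahrenheit_to_celsius(temp_f: float) -> float:
    """Convert a temperature from degrees Fahrenheit to degrees Celsius."""
    return (temp_f - 32) * 5 / 9


# Temperatures quoted in the article, in degrees Fahrenheit (labels are ours).
quoted = {
    "Uranus low": -371.2,
    "Mercury day": 800.6,
    "Mercury night": -277.6,
}

for label, temp_f in quoted.items():
    print(f"{label}: {temp_f} F = {fahrenheit_to_celsius(temp_f):.0f} C")
```

Rounded to the nearest degree, these come out to about -224, 427 and -172 degrees Celsius.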

Mercury’s lack of an atmosphere also means no clouds, rain, wind, or storms.

So, the next time you are complaining about the weather, consider how lucky we are to only have Earth’s weather to contend with.

++++++++++

After a year in space, astronaut’s DNA no longer matches that of his identical twin

https://www.cnn.com/2018/03/14/health/scott-kelly-dna-nasa-twins-study/index.html

++++++++++

HomeBiogas 2.0 – Convert Food Waste to Energy — DudeIWantThat.com

In a way, we all produce HomeBiogas right inside our amazing bodies. And I don’t mean that just as a Beavis & Butthead joke (though, check out the biogas I’m making from these chili cheese jalapeno nachos, Beavis. Hehheh, heheh.) When we eat food, our bodies digest it, absorb the nutrients, and release the rest as waste.

With the HomeBiogas 2.0, it’s bacteria that are eating the food – in this case our own inedible or leftover food scraps, or animal manure – digesting and absorbing the nutrients they need, and releasing the rest as waste. It’s called biogas. And HomeBiogas 2.0 has figured out something way more productive to use the bacteria’s waste for than stinking your friend Cornelius out of the living room right at the big reveal in Arrival.

Read more at: https://www.dudeiwantthat.com/household/miscellaneous/homebiogas-20-convert-food-waste-to-energy.asp

++++++++++

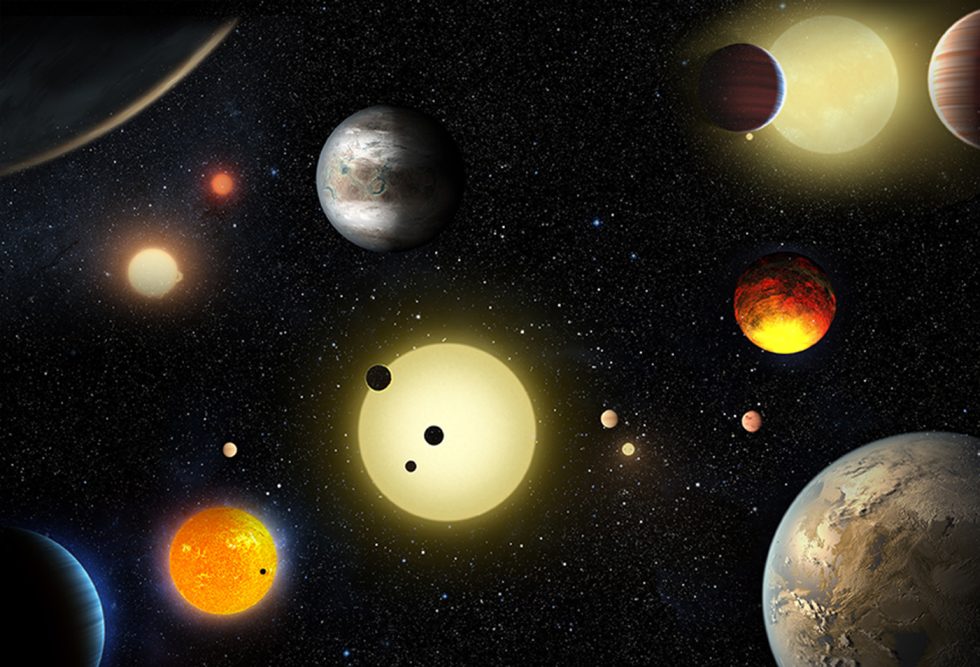

Code Girls: The Untold Story of the American Women Code Breakers of World War II Paperback – October 9, 2018

++++++++++

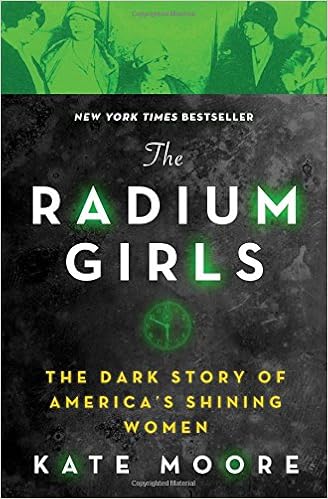

The Radium Girls: The Dark Story of America’s Shining Women Paperback – March 6, 2018

++++++++++

The Boston Dynamics Robot Dog Can Now Call For Backup And Open Doors – Digg

https://digg.com/video/boston-dynamics-robo-dog-door-open

++++++++++

Scientists Warn of a Global Cyber-Attack… From ALIENS

The 13th Floor

I should make two points clear up front: first, this story is sourced from a legitimate scientific publication and has been covered by major outlets (including the Washington Post), not just some fringe conspiracy site; second — and this should put your mind at ease for the moment — it’s purely a hypothetical thought experiment…. Read the full story

++++++++++

Ralph McQuarrie cover art for ‘Robot Visions’ by Isaac Asimov, 1990.
